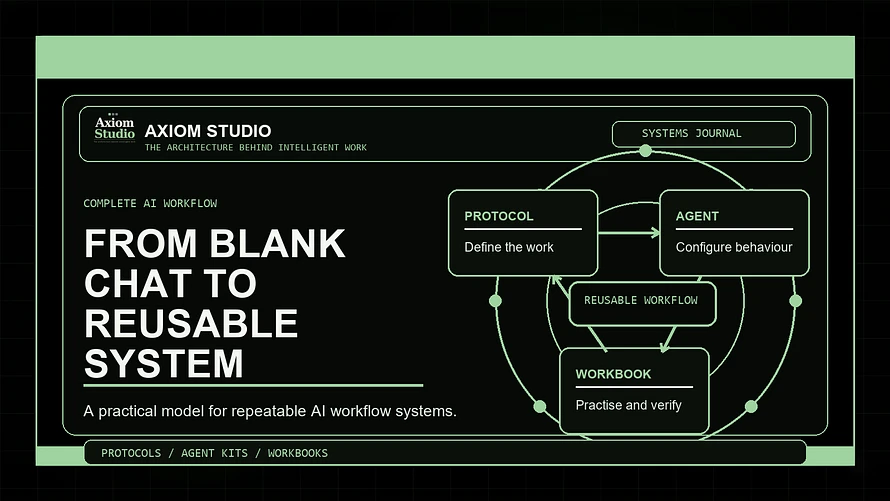

How protocols, agent kits, and workbooks turn AI from a useful tool into a repeatable way of working

Most people do not have an AI problem.

They have a workflow problem.

The AI is usually capable enough to help. The model can write, summarise, compare, draft, reason, plan, critique, restructure, classify, and explain. It can be useful in a dozen different ways before you have even finished your coffee.

The problem is that most AI sessions begin with the same fragile ritual:

Open a chat box. Ask for something. Hope the answer is good. Try again when it is not.

That is fine for exploration.

It is not enough for serious work.

If you are using AI for research, content, proposals, planning, customer support, business analysis, learning, technical delivery, or operational decisions, you need more than a clever prompt. You need a way of working that can survive Tuesday afternoon, a vague brief, missing context, three tabs of notes, and your own optimism.

That is where a complete AI workflow comes in.

At Axiom Studio, we think useful AI work is built from three core pillars:

- Protocols define the operating process.

- Agent Kits define the assistant behaviour.

- Workbooks build the human practice around the workflow.

Together, they turn AI from "a box you ask things" into a repeatable working system.

This article explains how that system works.

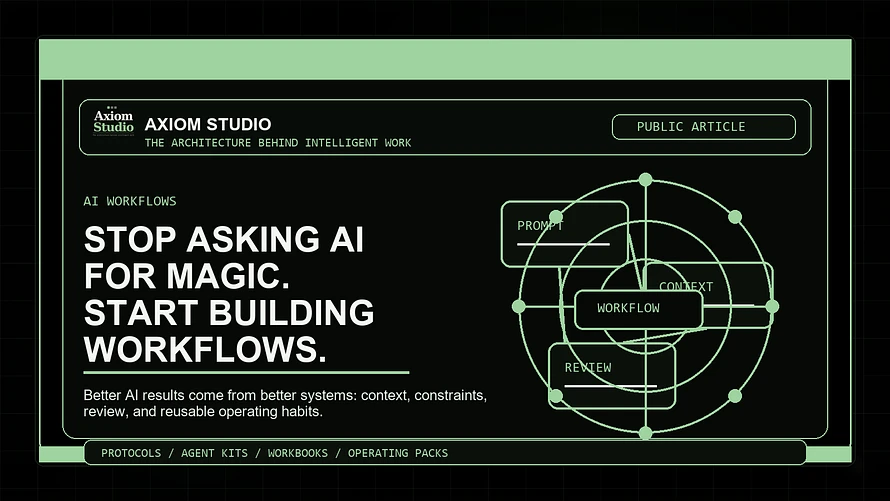

The old pattern: prompt, hope, repeat

Most people start with prompt-first thinking.

They ask:

"What should I type into the AI?"

That question is understandable. Prompts are visible. Prompts feel like the thing you control. A better prompt can absolutely improve the result.

But prompt-first thinking has a ceiling.

It often creates sessions like this:

- The first answer is too broad.

- The second answer is better, but still misses context.

- The third answer sounds confident, but you are not sure whether it is true.

- The fourth answer is closer, but now the conversation has become messy.

- The fifth answer is something you copy into a document called "final-final-new-v3", which is a cry for help disguised as file management.

The issue is not that the prompt was bad.

The issue is that the workflow was never designed.

The better pattern: define the system before asking for output

A strong AI workflow does not begin with the prompt.

It begins with the work.

Before you ask AI to produce anything, define:

- what task you are actually running

- what good output looks like

- what context the AI needs

- what role the AI should play

- what constraints matter

- what risks need review

- what format the result should arrive in

- what you will do with the output afterwards
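One way to make that list hard to skip is to treat it as a form that must be filled in before the session starts. The sketch below is only an illustration: `WorkflowBrief` and its field names are hypothetical, chosen to mirror the eight items above, and are not part of any Axiom Studio product.

```python
from dataclasses import dataclass, fields


@dataclass
class WorkflowBrief:
    """Hypothetical container for the eight definitions listed above."""

    task: str = ""
    good_output: str = ""
    context: str = ""
    role: str = ""
    constraints: str = ""
    risks: str = ""
    output_format: str = ""
    next_use: str = ""

    def missing(self) -> list[str]:
        """Return the names of the fields that are still blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


brief = WorkflowBrief(
    task="Draft a client proposal",
    good_output="Scope, assumptions, risks, and deliverables are explicit",
    role="Practical business analyst",
)

# Lists the definitions that still need an answer before asking for output.
print(brief.missing())
```

If `missing()` returns anything, the brief is not ready, and neither is the prompt.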

This shifts the session from improvisation to structure.

Instead of asking AI to "help with content", you run a content production workflow.

Instead of asking AI to "summarise this research", you run a research synthesis workflow.

Instead of asking AI to "write a proposal", you run a proposal development workflow with scope, assumptions, risks, deliverables, and review gates.

The difference is in what gets defined.

A prompt asks for a result.

A workflow defines how the result should be created, checked, improved, and reused.

The complete AI workflow loop

A useful AI workflow has eight practical stages.

You do not need to make this complicated. You do need to make it visible.

1. Define the outcome

Start by naming the job.

Not the tool. Not the model. Not the prompt.

The job.

For example:

- Create a first draft of a client proposal.

- Compare three product ideas.

- Turn meeting notes into an action plan.

- Review an AI-generated article for weak assumptions.

- Build a reusable onboarding checklist.

- Analyse a business process for automation opportunities.

The clearer the outcome, the less the AI has to guess.

Good outcome definition includes:

- the intended result

- the audience or user

- the decision the output supports

- the quality level required

- the point where the work is considered finished

If you cannot define the outcome, the AI cannot reliably help you reach it.

2. Load the context

AI does not know what matters unless you tell it.

Context is not decoration. It is operating material.

Useful context can include:

- business goals

- audience details

- brand voice

- project notes

- customer pain points

- constraints

- examples

- source material

- past decisions

- preferred formats

- things to avoid

This is where many sessions fail. People give AI the task, but not the environment the task lives inside.

Then the AI fills the gaps with generic assumptions.

And generic assumptions are very polite. They arrive nicely formatted and pretend they were invited.

3. Choose the protocol

Once you know the outcome and context, choose the operating protocol.

The protocol is the workflow structure.

It defines:

- the task sequence

- the questions to answer

- the decision criteria

- the output stages

- the quality-control checks

- the handoff format

Different work needs different protocols.

A research workflow needs source discipline and evidence checks.

A content workflow needs audience, angle, structure, draft, revision, and repurposing steps.

A proposal workflow needs scope, assumptions, deliverables, risk review, and client-readiness checks.

An automation workflow needs task inventory, suitability scoring, failure impact, and human-review boundaries.
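As a rough sketch, the four examples above can be written down as a small lookup table, so that picking a protocol becomes an explicit step and not a habit. The step names come from the paragraphs above; the `PROTOCOLS` dictionary and the `choose_protocol` helper are hypothetical names used only for illustration.

```python
# Step names follow the examples in the text; the structure itself is an assumption.
PROTOCOLS: dict[str, list[str]] = {
    "research": ["source discipline", "evidence checks"],
    "content": ["audience", "angle", "structure", "draft", "revision", "repurposing"],
    "proposal": [
        "scope",
        "assumptions",
        "deliverables",
        "risk review",
        "client-readiness check",
    ],
    "automation": [
        "task inventory",
        "suitability scoring",
        "failure impact",
        "human-review boundaries",
    ],
}


def choose_protocol(task_type: str) -> list[str]:
    """Return the ordered steps for a task type, or fail loudly if none is defined."""
    try:
        return PROTOCOLS[task_type]
    except KeyError:
        known = ", ".join(sorted(PROTOCOLS))
        raise ValueError(f"No protocol for {task_type!r}; known types: {known}") from None


# Print the proposal protocol as a numbered sequence.
for number, step in enumerate(choose_protocol("proposal"), start=1):
    print(f"{number}. {step}")
```

Failing on an unknown task type is deliberate in this sketch: it forces the question "which process is this?" before any output is requested.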

This is why Axiom Studio builds Protocols as a core product line. They give you the repeatable operating layer, so you are not rebuilding the same process every time you open an AI tool.

Explore Protocols: https://axiom-studio.co/collections/protocols

4. Configure the assistant

The protocol defines the process.

The assistant defines the behaviour.

This is where an Agent Kit becomes useful.

An Agent Kit gives the AI a specialist role, rules, boundaries, review habits, and output expectations.

For example, a research assistant should behave differently from a content strategist.

A quality reviewer should not simply praise the work. It should inspect it, challenge it, score it, and identify what needs revision.

A business analyst should diagnose a workflow before recommending tools.

A coding assistant should clarify intent, respect existing architecture, avoid unnecessary rewrites, and verify changes before calling the work finished.

Good assistant instructions tell the AI:

- what role it is playing

- what it should prioritise

- what it must avoid

- what questions it should ask

- how it should structure outputs

- when it should flag uncertainty

- how it should review its own work
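Those seven points can be captured as structured settings and rendered into instruction text. This is a minimal sketch under assumptions: `AssistantConfig`, its fields, and the rendered layout are hypothetical and do not describe the actual contents of an Agent Kit. The example values reuse the coding assistant described above.

```python
from dataclasses import dataclass, field


@dataclass
class AssistantConfig:
    """Hypothetical assistant settings mirroring the list above."""

    role: str
    priorities: list[str] = field(default_factory=list)
    avoid: list[str] = field(default_factory=list)
    questions: list[str] = field(default_factory=list)
    output_structure: str = ""
    uncertainty_rule: str = ""
    self_review: str = ""

    def render(self) -> str:
        """Render the settings as plain instruction text, skipping empty parts."""
        lines = [f"Role: {self.role}"]
        list_sections = [
            ("Prioritise", self.priorities),
            ("Avoid", self.avoid),
            ("Ask before starting", self.questions),
        ]
        for title, items in list_sections:
            if items:
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        text_sections = [
            ("Output structure", self.output_structure),
            ("Flag uncertainty", self.uncertainty_rule),
            ("Self-review", self.self_review),
        ]
        for title, text in text_sections:
            if text:
                lines.append(f"{title}: {text}")
        return "\n".join(lines)


config = AssistantConfig(
    role="Coding assistant",
    priorities=["clarify intent", "respect existing architecture"],
    avoid=["unnecessary rewrites"],
    self_review="Verify changes before calling the work finished.",
)
print(config.render())
```

Keeping the settings as data, with the text generated from them, makes it easier to reuse the same role across sessions and to see at a glance which instruction is missing.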

This turns AI from a general-purpose response machine into a more reliable working partner.

Explore Agent Kits: https://axiom-studio.co/collections/agent-kits

5. Run the workflow in stages

Do not ask AI to do the entire job in one heroic leap.

That is how you get long, confident output with hidden cracks.

Run the workflow in stages.

For example:

- Clarify the task.

- Organise the context.

- Produce a first version.

- Review against criteria.

- Revise with constraints.

- Create the final handoff.

This staged approach creates more control.

It also makes it easier to see where the workflow is breaking.
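A small sketch of the idea, assuming the six example stages above: each stage is a separate request, and each result is recorded so a weak output can be traced to the stage that produced it. The `run_stages` function is hypothetical, and `fake_assistant` is only a stand-in for a real model call; no real AI API is used here.

```python
from typing import Callable

STAGES = [
    "Clarify the task",
    "Organise the context",
    "Produce a first version",
    "Review against criteria",
    "Revise with constraints",
    "Create the final handoff",
]


def run_stages(task: str, ask: Callable[[str], str]) -> list[tuple[str, str]]:
    """Run each stage in order, feeding the previous result into the next request."""
    history: list[tuple[str, str]] = []
    previous = task
    for stage in STAGES:
        request = f"Stage: {stage}\nInput:\n{previous}"
        previous = ask(request)
        history.append((stage, previous))  # keep every intermediate result
    return history


def fake_assistant(request: str) -> str:
    """Stand-in for a real model call; it only echoes the stage name."""
    stage_line = request.splitlines()[0]
    return f"[output for {stage_line.removeprefix('Stage: ')}]"


history = run_stages("Turn meeting notes into an action plan", fake_assistant)
for stage, output in history:
    print(f"{stage}: {output}")
```

In real use, `ask` would wrap whichever assistant you work with, and a human check could sit between any two stages.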

If the output is weak, you can ask:

- Was the outcome unclear?

- Was context missing?

- Was the wrong protocol used?

- Were the assistant instructions too loose?

- Was the review step skipped?

- Was the output format poorly defined?

That is much better than staring at the screen thinking, "Well, the machine has produced words again."

6. Review before trusting

This is the step many people skip.

It is also the step that separates casual AI use from professional AI use.

AI output should be reviewed before it is trusted, published, sent, used in a decision, or built upon.

Review does not have to be dramatic. It just needs to be consistent.

Check for:

- missing context

- unsupported claims

- weak assumptions

- unclear recommendations

- poor fit for the audience

- hallucinated details

- overconfident language

- risk areas that need human judgement

- output that sounds good but does not actually solve the problem
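To keep review consistent, the checks above can be recorded as explicit answers. The sketch below is illustrative only: `REVIEW_CHECKS` copies the list above, while `open_issues` and the rule that an unanswered check counts as a problem are assumptions, not a described Axiom Studio method.

```python
REVIEW_CHECKS = [
    "missing context",
    "unsupported claims",
    "weak assumptions",
    "unclear recommendations",
    "poor fit for the audience",
    "hallucinated details",
    "overconfident language",
    "risk areas that need human judgement",
    "sounds good but does not solve the problem",
]


def open_issues(findings: dict[str, bool]) -> list[str]:
    """Return the checks that found a problem or were never recorded.

    `findings` maps a check name to True when the reviewer found that problem.
    A check with no recorded answer counts as open, so skipped checks block sign-off.
    """
    return [check for check in REVIEW_CHECKS if findings.get(check, True)]


# Example review: one problem found, one check skipped entirely.
findings = {check: False for check in REVIEW_CHECKS}
findings["unsupported claims"] = True
del findings["hallucinated details"]

print(open_issues(findings))
```

The output is trusted only when `open_issues` returns an empty list, which makes "we forgot to check" visible as a failure.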

This is where Axiom Studio Workbooks matter.

Workbooks help users build the skill of checking, improving, and repeating AI work. They turn AI from a one-off output generator into a practice environment.

Explore Workbooks: https://axiom-studio.co/collections/workbooks

7. Turn the result into a reusable asset

The best AI session should not disappear when the chat ends.

If a workflow worked, save it.

Capture:

- the task type

- the context used

- the protocol steps

- the assistant instructions

- the prompt blocks

- the review checklist

- the output format

- what you would improve next time
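One possible way to capture those items is a small record that can be saved as JSON and loaded again later. This is a sketch under assumptions: `WorkflowAsset`, its field names, and the JSON layout are hypothetical, and the sample values are made up for illustration.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class WorkflowAsset:
    """Hypothetical record of a workflow that worked, mirroring the list above."""

    task_type: str
    context_used: list[str] = field(default_factory=list)
    protocol_steps: list[str] = field(default_factory=list)
    assistant_instructions: str = ""
    prompt_blocks: list[str] = field(default_factory=list)
    review_checklist: list[str] = field(default_factory=list)
    output_format: str = ""
    improvements: list[str] = field(default_factory=list)


def to_json(asset: WorkflowAsset) -> str:
    """Serialise the asset so it can be stored alongside other saved workflows."""
    return json.dumps(asdict(asset), indent=2)


def from_json(text: str) -> WorkflowAsset:
    """Rebuild an asset from its saved JSON text."""
    return WorkflowAsset(**json.loads(text))


asset = WorkflowAsset(
    task_type="proposal development",
    protocol_steps=["scope", "assumptions", "deliverables", "risk review"],
    assistant_instructions="Act as a practical business analyst.",
    review_checklist=["unsupported claims", "weak assumptions"],
    improvements=["Load pricing constraints earlier"],
)

saved = to_json(asset)
restored = from_json(saved)
print(restored.task_type, len(restored.protocol_steps))
```

Writing `saved` to a file per workflow is enough to start a library; the format matters less than the habit of saving.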

This creates reusable workflow assets.

Over time, you stop starting from a blank chat box and begin working from a growing library of proven working systems.

That is the real productivity gain.

Not faster typing.

Better reuse.

8. Improve the human system

AI workflows are not only about the AI.

They are about the human system around the AI.

The person using the tool still has to:

- define the work

- recognise bad output

- ask better follow-up questions

- understand risk

- decide what matters

- review the final result

- improve the process

That means AI capability is a practice, not just a purchase.

You can buy a powerful tool and still use it badly.

You can also use a simple tool extremely well if your workflow is clear.

The goal is not to become dependent on AI.

The goal is to become better at directing intelligent systems.

How the three Axiom pillars work together

Here is the simple version.

Protocols answer:

What process are we running?

Agent Kits answer:

How should the AI behave while running it?

Workbooks answer:

How do we practise, verify, and improve the way we use it?

You can use each pillar on its own.

But they become stronger together.

A protocol without a good assistant can still produce inconsistent behaviour.

An agent without a workflow can become a very confident assistant with nowhere useful to go.

A workbook without implementation can become learning that never turns into practice.

Together, they create a complete operating stack:

- define the work

- configure the assistant

- run the workflow

- review the output

- practise the skill

- save the system

- improve it next time

That is the architecture behind intelligent work.

A practical example: turning a messy idea into usable work

Imagine you want to create a new offer for your business.

The prompt-first version might be:

"Give me some product ideas for my business."

You might get a list. Some ideas may be useful. Some may be generic. Some may sound like they were assembled from the internet's spare parts drawer.

The workflow version looks different.

First, you define the outcome:

"I need three realistic product ideas that fit my audience, can be delivered within two weeks, and could become repeatable digital assets."

Then you load context:

- current audience

- existing products

- common customer problems

- available skills

- pricing range

- delivery constraints

- examples of offers that fit the brand

Then you choose a protocol:

- idea inventory

- audience fit

- value scoring

- delivery complexity

- risk check

- next-action plan

Then you configure the assistant:

"Act as a practical business analyst. Prioritise realistic offers over exciting but fragile ideas. Challenge assumptions. Score each idea using audience fit, build effort, repeatability, and revenue potential."

Then you run the workflow in stages.

Then you review the output.

Then you turn the best result into a saved workflow for future product development.

That is no longer a random AI chat.

That is a working system.
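The scoring step in that example can be sketched as a weighted sum over the four criteria named in the assistant instructions. Everything numeric here is an assumption made for illustration: the weights, the 1 to 5 rating scale, the choice to invert build effort so that lower effort scores higher, and the two placeholder ideas with their ratings.

```python
# Assumed weights; adjust them to reflect what matters for your business.
WEIGHTS = {
    "audience_fit": 0.4,
    "build_effort": 0.2,
    "repeatability": 0.2,
    "revenue_potential": 0.2,
}


def score(ratings: dict[str, int]) -> float:
    """Combine 1-5 ratings into one score; build effort is inverted (low effort is good)."""
    adjusted = dict(ratings)
    adjusted["build_effort"] = 6 - ratings["build_effort"]
    return round(sum(WEIGHTS[name] * adjusted[name] for name in WEIGHTS), 2)


# Placeholder ideas with made-up ratings, purely to show the mechanics.
ideas = {
    "Idea A": {"audience_fit": 5, "build_effort": 2, "repeatability": 4, "revenue_potential": 3},
    "Idea B": {"audience_fit": 3, "build_effort": 5, "repeatability": 2, "revenue_potential": 5},
}

# Rank ideas from highest to lowest score.
ranked = sorted(ideas, key=lambda name: score(ideas[name]), reverse=True)
for name in ranked:
    print(name, score(ideas[name]))
```

The ratings still come from human judgement or a reviewed AI pass. The code only makes the trade-offs explicit and repeatable.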

The takeaway

AI is useful when it helps you think, build, review, and repeat.

It is much less useful when every session starts from nothing and ends in a pile of unverified text.

The future of AI work is not just better prompts.

It is better operating systems.

At Axiom Studio, we are building resources for people who want to use AI with more structure, more control, and more repeatability.

Protocols help define the workflow.

Agent Kits help configure the assistant.

Workbooks help build the practice.

Together, they help turn AI from a clever tool into a dependable part of how work gets done.

Explore the full Axiom Studio library: https://axiom-studio.co/collections/all

Because the blank chat box is not the workflow.

It is only the doorway.